The start of the ESS

Max Kaase, initiator of the ESS

The ESS officially started in 2001. The initiative came from Max Kaase and Roger Jowell, but it would not have been possible without the support of representatives of different European countries and of the European Science Foundation. The idea was to carry out a series of comparable surveys in European countries on different social and political science issues every two years. It would not be a panel but a series of cross-sectional studies. The national coordinator of the research in each country would be a member of the steering committee of the ESS, chaired by Max Kaase. The Central Coordinating Team (CCT) would be the group of methodologists from different countries who would organize the data collection and control the quality of the work. Willem was invited to participate in the latter group. With this, an unexpected new academic life started for him.

Roger Jowell, chairman of the CCT

A challenging task

The CCT had the challenging task of safeguarding the quality of the data collection. I wondered whether we already knew enough for such a project. How could we make sure that the questions had the same meaning in the different countries? Would the responses be comparable across countries? Would the measurement errors be the same across countries? I shared these concerns with Janet Harkness (Germany). Others, such as Jaak Billiet (Belgium), Ineke Stoop (Netherlands) and representatives of ZUMA (Germany), were more worried about the comparability of the sampling procedures across countries. The Norwegian archive was chosen to archive the collected data and all other information and to make this information accessible to potential users.

Roger Jowell and his team had to ensure that we not only studied how to do this work but also generated an acceptable product.

Without trying such a project one will never learn how to do it. We learned a lot along the way. For my part, I saw the opportunity to apply the tool SQP to evaluate the quality of the questions that were developed for the ESS.

In addition, I convinced the CCT of the necessity of evaluating the comparability of the quality of questions across languages. For that purpose I proposed repeating some central questions of the main questionnaire in different forms in a supplementary questionnaire. In this way we could determine what the best form for these questions would be in this and other cross-cultural research.

I foresaw a lot of interesting work for the coming years. I also suggested that we could do the first pilot study in the Netherlands and produce the evaluation report of the two pilots, which would be carried out in the Netherlands and Ireland.

Evaluation of the Core questions

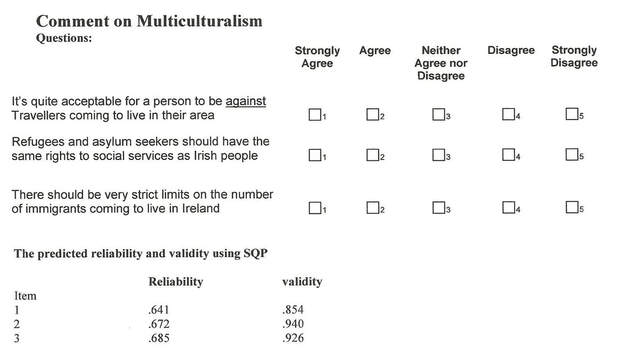

The ESS questionnaire consisted, and still consists, of two parts: a set of core questions that is repeated in every round of data collection, and a set of rotating questions that is proposed each time by a different group of researchers. Clearly, evaluating the core questions was our first priority. For each topic, a theoretical argument for a number of specific questions was proposed by one or more experts in that field. On behalf of the CCT, Irmtraud and I evaluated these questions using the program SQP. We evaluated in particular the form of the questions. Already in the first report we indicated that Agree-Disagree questions using statements had a much lower reliability than direct questions where respondents are forced to make a choice. The table shows a typical example.

This table comes from the report we made for the CCT. It was based on coding these questions in SQP, which predicts the reliability and validity of the questions. Later this issue was studied using MTMM (multitrait-multimethod) experiments, which showed that the difference in reliability and validity was very large (Revilla et al.).

We also commented on the formulation of the items, for example when two different aspects were mentioned in one question, which can create problems (double-barreled questions).

Our comments were sent to the experts, and a discussion followed until the expert and the question design group of the ESS came to an agreement on the final formulation of the questions. However, in some cases a difference of opinion remained, and for these questions a test was specified in the pilot studies.

The pilot studies

As mentioned above, the pilot was done to test the quality of alternative formulations of questions. MTMM experiments were used for this purpose. The pilot studies were also a test of the whole data collection procedure, including the supplementary questionnaire, which contained the Schwartz value questionnaire and the repeated questions that were part of the MTMM experiments.

In the Netherlands the SRF did this research. It was organized by Irmtraud, using portable computers. Because the interview program registered the time at which each interview started and ended, it was possible to detect that some interviewers had filled in the questions themselves. One interviewer did this between midnight and 2 o’clock at night and nevertheless claimed that these were real interviews. Of course, we refused to pay him.

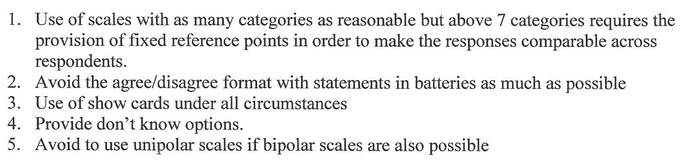

The analysis of the collected data led to the following general rules for the formulation of the questions:

This process led to the reformulation of many questions. For some questions, some doubt remained about how to formulate them. For the most important of these, a so-called MTMM experiment was designed for this round.

The MTMM experiments in the first round of the ESS

The MTMM experiments in the first round of the ESS concerned the following topics:

Media use, social contacts, political action, political efficacy, social trust, satisfaction with the government, and the Schwartz value scale.

For each of these topics, the form of the questions expected to be best was chosen for the main questionnaire. Possible alternative forms of the same questions were included in the supplementary questionnaire. In the supplementary questionnaire, the Schwartz value questions were asked first, followed by the questions chosen to be repeated for the MTMM experiments.

Normally, in MTMM experiments all respondents have to answer all questions in three different forms. However, to avoid memory effects, there should be sufficient time between the repetitions of nearly the same questions. Between the questions in the main questionnaire and the first repetition there would be more than half an hour, which would be enough, but there would not be enough time between the second and the third repetition. Therefore we designed a new MTMM design for the ESS in which two random groups are formed. The first group gets the first form of the repeated questions and the second group the second form. This so-called “Split Ballot MTMM design” has been used during several rounds of the ESS and has provided suggestions for the best form of the questions, not only in one country but across all countries that participated in the specific studies.
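
The random assignment behind such a split-ballot design can be sketched in a few lines of Python. This is only a minimal illustration and not the procedure actually used in the ESS: the function name `assign_split_ballot`, the form labels `main`, `alternative_1` and `alternative_2`, and the fixed seed are all assumptions made for the example.

```python
import random


def assign_split_ballot(respondent_ids, seed=0):
    """Randomly split respondents into two groups of (nearly) equal size.

    Every respondent answers the main-questionnaire form; the group
    decides which alternative form is asked in the supplementary
    questionnaire. All labels here are hypothetical.
    """
    rng = random.Random(seed)  # fixed seed only to make the sketch reproducible
    shuffled = list(respondent_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2

    plan = {}
    for position, respondent in enumerate(shuffled):
        group = 1 if position < half else 2
        plan[respondent] = {
            "group": group,
            # main form first, then one alternative form per group
            "forms": ("main", f"alternative_{group}"),
        }
    return plan


if __name__ == "__main__":
    for respondent, info in sorted(assign_split_ballot(range(1, 7)).items()):
        print(respondent, info["group"], info["forms"])
```

In this sketch each respondent answers only two forms of a question instead of three, so the second repetition, for which there would be too little time, is avoided.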

This whole process has been documented in the archive of the ESS.

After all the problems at the University of Amsterdam this was exciting work, and we saw new, relevant and interesting research ahead of us for the coming years. It felt like the start of a new scientific research life for both of us, although so far more for Willem than for Irmtraud; but that also changed within a short time.
